5 Google Search Console Features You Need to Explore

Before I dive into what everyone needs to know about Google Search Console, let me give you a little backstory. Google Search Console (previously Google Webmaster Tools) is a free web service from Google for webmasters. It allows webmasters to check their sites' indexing status and optimize their visibility in search results. On May 20, 2015, Google rebranded Google Webmaster Tools as Google Search Console.

Over the years, Search Console has become more important to webmasters and to the Search Engine Optimization (SEO) community. The best way to familiarize yourself with Search Console is to dive right in and create a free account. The more time you spend exploring it, the easier it will be to understand how search engines crawl and interpret websites.

Below you’ll find a selection of my favorite, most useful Search Console features and what they can do for you.

1) Search Traffic: Search Analytics

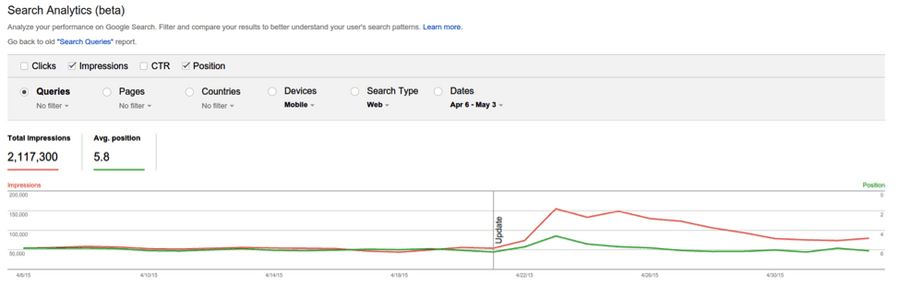

Search Analytics is hands-down the best part of the Search Traffic section, if not all of Search Console. It provides critical search metrics for your website, including impressions, clicks, click-through rate (CTR), and average position (ranking).

You can also filter that data by multiple dimensions, including queries, pages, countries, devices, and more. SEO geeks, make sure to check out Queries: it shows the organic search terms people used to find your site.
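
CTR is simply clicks divided by impressions. Here is a minimal Python sketch of filtering by a dimension and recomputing CTR, assuming you have exported Search Analytics rows as (query, device, clicks, impressions) tuples; the `ROWS` data and the function names are made up for illustration and are not part of any Search Console API.

```python
from collections import defaultdict

# Hypothetical rows shaped like a Search Analytics export: (query, device, clicks, impressions)
ROWS = [
    ("seo tips", "MOBILE", 30, 1000),
    ("seo tips", "DESKTOP", 20, 500),
    ("search console", "MOBILE", 5, 250),
]
CLICKS, IMPRESSIONS = 2, 3  # column positions in each row


def ctr(clicks: int, impressions: int) -> float:
    """Click-through rate as a fraction (0.0 when there were no impressions)."""
    return clicks / impressions if impressions else 0.0


def ctr_by(rows, dimension_index: int) -> dict:
    """Sum clicks and impressions per value of one dimension, then compute CTR per group."""
    totals = defaultdict(lambda: [0, 0])
    for row in rows:
        key = row[dimension_index]
        totals[key][0] += row[CLICKS]
        totals[key][1] += row[IMPRESSIONS]
    return {key: ctr(c, i) for key, (c, i) in totals.items()}


if __name__ == "__main__":
    for query, rate in ctr_by(ROWS, 0).items():
        print(f"{query}: {rate:.1%}")
    # Prints:
    # seo tips: 3.3%
    # search console: 2.0%
```

Summing clicks and impressions per group before dividing, rather than averaging each row's CTR, keeps high-impression rows properly weighted.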

2) Search Appearance: HTML Improvements

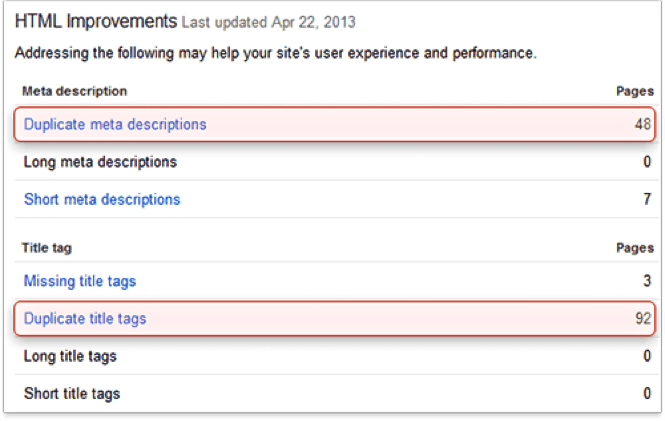

The HTML Improvements section not only helps improve how your pages display on the Search Engine Result Page (SERP), but also points out SEO issues on your site. Here’s a brief rundown of common SEO issues that Search Console can identify:

Duplicate Content

Duplicate content is content that appears on the Internet in more than one place. When there are multiple pieces of identical content on the Internet, it is difficult for search engines to decide which version is more relevant to a given search query.
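
Search engines use their own, far more sophisticated methods to spot duplicates. As a rough illustration of the idea, the Python sketch below flags pages whose text is exactly identical after lowercasing and collapsing whitespace; the page URLs and text are hypothetical, and near-duplicates (reworded copies) would slip past it.

```python
import hashlib
import re
from collections import defaultdict


def fingerprint(text: str) -> str:
    """Hash page text after lowercasing and collapsing runs of whitespace."""
    normalized = re.sub(r"\s+", " ", text).strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def find_duplicates(pages: dict) -> list:
    """Return sorted groups of URLs whose normalized text is identical."""
    groups = defaultdict(list)
    for url, text in pages.items():
        groups[fingerprint(text)].append(url)
    return [sorted(urls) for urls in groups.values() if len(urls) > 1]


if __name__ == "__main__":
    # Hypothetical pages for illustration
    pages = {
        "https://example.com/shoes": "Red running shoes, on sale now.",
        "https://example.com/shoes?ref=ad": "Red  running shoes,\non sale now.",
        "https://example.com/hats": "Wool hats for winter.",
    }
    print(find_duplicates(pages))
    # [['https://example.com/shoes', 'https://example.com/shoes?ref=ad']]
```

URL variants like the `?ref=ad` page are typically consolidated with a canonical tag or a 301 redirect.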

Missing Metadata (Title Tags & Meta Descriptions)

The title tag is an HTML element, critical to both SEO and user experience, that briefly and accurately describes the topic of a web page. The title is displayed in two key places: the browser tab (or title bar) and the search engine result page.

The meta description is an HTML meta tag that gives a concise summary of a page’s contents. Search engines commonly use it as the preview snippet for that page on SERPs.
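
To check a page for missing metadata yourself, you can pull both elements out of its HTML. A minimal sketch using Python’s built-in `html.parser` module; `MetadataParser` and `extract_metadata` are hypothetical names for this example:

```python
from html.parser import HTMLParser


class MetadataParser(HTMLParser):
    """Collect the <title> text and the meta description from an HTML document."""

    def __init__(self):
        super().__init__()
        self.title = None
        self.description = None
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)  # attribute values can be None for bare attributes
        if tag == "title":
            self._in_title = True
            self.title = ""
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.description = attrs.get("content")

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def extract_metadata(html: str):
    """Return (title, description); either is None when missing or empty."""
    parser = MetadataParser()
    parser.feed(html)
    parser.close()
    title = (parser.title or "").strip() or None
    description = (parser.description or "").strip() or None
    return title, description


if __name__ == "__main__":
    page = ('<html><head><title>Red Shoes | Example</title>'
            '<meta name="description" content="Red running shoes on sale."></head></html>')
    print(extract_metadata(page))  # ('Red Shoes | Example', 'Red running shoes on sale.')
```

A page with neither tag returns `(None, None)`, the “missing metadata” case.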

Over-Optimized or Under-Optimized Metadata

Search Console flags title tags and meta descriptions that are too long, too short, or otherwise don’t follow Google’s guidelines. Common SEO guidance suggests keeping title tags to about 60 characters and meta descriptions to about 150 characters.
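
Those limits are easy to check in bulk. A small Python sketch, assuming the 60- and 150-character rules of thumb above (not official Google limits); `metadata_issues` is a hypothetical helper name:

```python
# Rule-of-thumb limits quoted in this post (assumption: not official Google limits).
TITLE_MAX = 60
DESCRIPTION_MAX = 150


def metadata_issues(title, description):
    """Return a list of human-readable problems with a page's title and meta description."""
    issues = []
    if not title:
        issues.append("missing title tag")
    elif len(title) > TITLE_MAX:
        issues.append(f"title too long ({len(title)} > {TITLE_MAX} chars)")
    if not description:
        issues.append("missing meta description")
    elif len(description) > DESCRIPTION_MAX:
        issues.append(
            f"meta description too long ({len(description)} > {DESCRIPTION_MAX} chars)"
        )
    return issues


if __name__ == "__main__":
    print(metadata_issues("A" * 72, None))
    # ['title too long (72 > 60 chars)', 'missing meta description']
```

Search results are generally understood to truncate titles and snippets by display width rather than an exact character count, so treat any character limit as an approximation.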

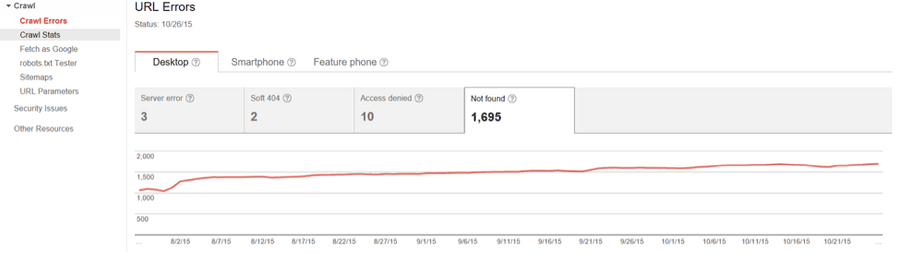

3) Crawl: Crawl Errors

The Crawl section of Search Console covers much of your website’s health. The Crawl Errors report lists site URLs that Google could not crawl successfully or that returned an HTTP error code. For example, a broken link on your website will show up as “Not Found,” meaning the URL returns a 404 error. Once you have identified broken links in Search Console, you can set up a 301 (permanent) redirect so users are sent to the correct page instead of a dead link.
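
How you set up a 301 depends on your server or CMS (for example, a web server config rule or a redirect plugin). To show what a 301 actually is at the HTTP level, here is a framework-free sketch written as a Python WSGI app; the `REDIRECTS` mapping and its paths are hypothetical:

```python
# Hypothetical mapping from broken (404) paths to their replacement pages.
REDIRECTS = {
    "/old-blog-post": "/blog/new-post",
    "/services.html": "/services",
}


def app(environ, start_response):
    """Minimal WSGI app: permanent redirects for known old paths, 404 for anything unknown."""
    path = environ.get("PATH_INFO", "/")
    if path in REDIRECTS:
        # 301 tells browsers and crawlers the move is permanent; Location names the new URL.
        start_response("301 Moved Permanently", [("Location", REDIRECTS[path])])
        return [b""]
    if path == "/":
        start_response("200 OK", [("Content-Type", "text/plain; charset=utf-8")])
        return [b"Home page"]
    start_response("404 Not Found", [("Content-Type", "text/plain; charset=utf-8")])
    return [b"Not Found"]
```

To try it locally, `wsgiref.simple_server.make_server("127.0.0.1", 8000, app).serve_forever()` serves the app on port 8000; requesting `/old-blog-post` should return a 301 with a `Location` header pointing to `/blog/new-post`.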

This section also shows the main site-wide issues from the past 90 days that prevented Googlebot from accessing your site. Click any box to display its chart and see more detail on each of the categories below:

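- DNS errors: Problems with the Domain Name System, which translates your site’s name into the numerical (IP) address of the server that hosts your website files. If that lookup fails, Googlebot can’t reach your site at all.

- Server errors: Problems with your host’s server, such as requests timing out or the server returning an error, which point to issues with connection reliability or speed.

- Robots.txt failure: Googlebot requests your robots.txt file before crawling your site, since the file tells it which pages it can and cannot access. If the file exists but can’t be fetched, Google postpones crawling rather than risk crawling pages you’ve blocked.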
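
A DNS error means the very first step, turning your hostname into an IP address, failed. You can run the same kind of lookup from your own machine with Python’s standard `socket` module. Keep in mind this checks your resolver, not Google’s, so a passing result doesn’t rule out a problem Googlebot saw; `resolves` and `addresses` are hypothetical helper names:

```python
import socket


def resolves(hostname: str) -> bool:
    """Return True if DNS (or the local hosts file) maps hostname to at least one address."""
    try:
        socket.getaddrinfo(hostname, 443, proto=socket.IPPROTO_TCP)
        return True
    except (socket.gaierror, UnicodeError):
        # gaierror: lookup failed; UnicodeError: malformed hostname (e.g. empty label)
        return False


def addresses(hostname: str) -> list:
    """List the distinct IP addresses a hostname resolves to (raises socket.gaierror on failure)."""
    infos = socket.getaddrinfo(hostname, 443, proto=socket.IPPROTO_TCP)
    return sorted({info[4][0] for info in infos})
```

For example, `addresses("www.example.com")` lists the IPv4 and/or IPv6 addresses your resolver returns for that name.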

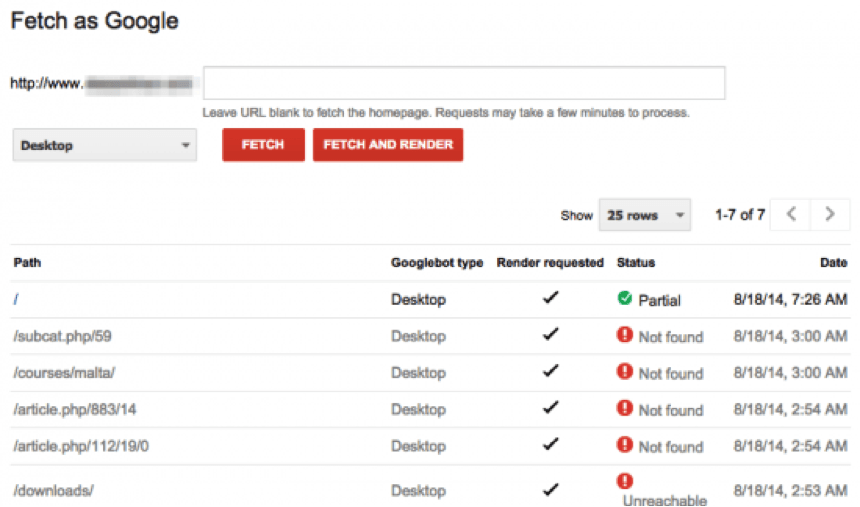

4) Crawl: Fetch As Google

Fetch as Google is an essential tool for checking that your pages are SEO-friendly (or at least Google-friendly). Google has to crawl a page before it can index it and show it on the SERP. As you continue to make on-page SEO optimizations (title tag changes, content changes, and more), you should check the URL with Fetch as Google to verify that Google can read it.

Fetch as Google also lets you tell Google that a page is ready to be indexed, which can speed up the process. It is especially useful for site upgrades, URL migrations, breaking news, and launches of new batches of content. Note that hitting the “Submit to index” button does not guarantee a URL will get indexed, but it does help get content into the SERPs faster.

Fetch is also helpful because it can show when a page cannot be crawled or rendered properly, for example because the page (or resources it needs) is blocked by robots.txt, or because of coding errors.
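
Fetch as Google itself runs on Google’s infrastructure and renders the page. For a rough local approximation of just the raw fetch, you can request a URL with a Googlebot user-agent string using Python’s `urllib`. This is an assumption-laden sketch: it doesn’t render JavaScript, doesn’t come from Google’s IP addresses (so servers that verify Googlebot may treat it differently), and can’t submit anything to the index. `build_request` and `fetch_as_googlebot` are hypothetical names.

```python
import urllib.error
import urllib.request

# One documented form of Googlebot's desktop user-agent string (Google lists several variants).
GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"


def build_request(url: str) -> urllib.request.Request:
    """Create a GET request that identifies itself with the Googlebot user-agent."""
    return urllib.request.Request(url, headers={"User-Agent": GOOGLEBOT_UA})


def fetch_as_googlebot(url: str, timeout: float = 10.0):
    """Return (HTTP status, first 500 bytes of the body) for a raw fetch.

    urlopen follows redirects, so the status is that of the final URL.
    """
    try:
        with urllib.request.urlopen(build_request(url), timeout=timeout) as response:
            return response.status, response.read(500)
    except urllib.error.HTTPError as err:
        # 4xx/5xx responses raise HTTPError; report the status instead of crashing.
        return err.code, err.read(500)
```

Calling `fetch_as_googlebot("https://example.com/")` returns the status code and the start of the HTML your server sends to that user-agent, which is enough to catch a server that blocks or errors for bots.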

5) Crawl: Sitemaps & Robots.txt Tester

An XML sitemap is a file that helps Google and other major search engines better understand your website while crawling it. The Sitemaps section is where you test new sitemaps or submit one to be crawled. A sitemap isn’t required for indexing, since Google also discovers pages by following links, but it helps Google find pages it might otherwise miss, especially on new or large sites.
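
Sitemaps follow the sitemaps.org protocol. A minimal Python sketch that builds one with the standard library’s `xml.etree.ElementTree`; the URLs and dates are placeholders, and `build_sitemap` is a hypothetical helper:

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls) -> bytes:
    """Build a minimal XML sitemap from (loc, lastmod) pairs; lastmod may be None."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in urls:
        url_el = ET.SubElement(urlset, "url")
        ET.SubElement(url_el, "loc").text = loc  # ElementTree escapes & and < for us
        if lastmod:
            ET.SubElement(url_el, "lastmod").text = lastmod  # W3C date, e.g. 2024-01-31
    # xml_declaration requires Python 3.8+
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)


if __name__ == "__main__":
    print(build_sitemap([
        ("https://example.com/", "2024-01-31"),
        ("https://example.com/about", None),
    ]).decode("utf-8"))
```

You would save the output as, say, `sitemap.xml` at your site root and submit it in the Sitemaps section; robots.txt can also point to it with a `Sitemap:` line.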

Along with sitemaps, robots.txt is a text file webmasters create to tell robots (typically search engine bots) which pages on their site they may crawl. Note that it controls crawling, not indexing: a blocked URL can still be indexed if other pages link to it. Search Console’s robots.txt Tester shows whether a URL is blocked, or disallowed, by robots.txt.
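
You can run a similar check locally with Python’s built-in `urllib.robotparser`. A minimal sketch, assuming the hypothetical `ROBOTS_TXT` rules below; this parser follows the original robots.txt conventions and doesn’t implement every Google extension (such as `*` and `$` wildcards in paths), so the Search Console tester remains the authority on how Google reads your file.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt for illustration
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/

User-agent: Googlebot
Disallow: /private/
"""


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Parse robots.txt text and report whether user_agent may crawl url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    print(is_allowed(ROBOTS_TXT, "Googlebot", "https://example.com/private/page"))  # False
    # Googlebot obeys only its own group here, so /admin/ is not blocked for it.
    print(is_allowed(ROBOTS_TXT, "Googlebot", "https://example.com/admin/"))  # True
```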

—

Now that you know what features to try out on Google Search Console, go forth and get familiar with your site. Are you a Search Console novice or genius? Do you have any favorite features? Share them with us! Tweet at us @Perfect_Search or email us at info@perfectsearchmedia.com.